Introduction to Linear Regression Analysis

John Wiley & Sons Inc (Publisher)

978-1-119-57872-7 (ISBN)

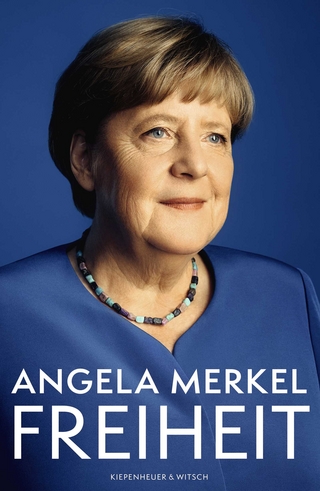

Introduction to Linear Regression Analysis, 6th Edition is a comprehensive and current examination of the foundations of linear regression analysis. In this fully updated sixth edition, the authors have included new material on generalized regression techniques and new examples to help the reader understand and retain the concepts taught in the book.

The new edition focuses on four key areas of improvement over the fifth edition:

- New exercises and data sets
- New material on generalized regression techniques
- Inclusion of JMP software in key areas
- Careful condensing of the text where possible

Introduction to Linear Regression Analysis skillfully blends theory and application in both the conventional and less common uses of regression analysis in today’s cutting-edge scientific research. The text equips readers to understand the basic principles needed to apply regression model-building techniques in various fields of study, including engineering, management, and the health sciences.

DOUGLAS C. MONTGOMERY, PHD, is Regents Professor of Industrial Engineering and Statistics at Arizona State University. Dr. Montgomery is the co-author of several Wiley books, including Introduction to Linear Regression Analysis, 5th Edition. ELIZABETH A. PECK, PHD, is Logistics Modeling Specialist at the Coca-Cola Company in Atlanta, Georgia. Dr. Peck is co-author of Introduction to Linear Regression Analysis, 5th Edition. G. GEOFFREY VINING, PHD, is Professor in the Department of Statistics at Virginia Polytechnic Institute and State University.

Preface xiii

About the Companion Website xvi

1. Introduction 1

1.1 Regression and Model Building 1

1.2 Data Collection 5

1.3 Uses of Regression 9

1.4 Role of the Computer 10

2. Simple Linear Regression 12

2.1 Simple Linear Regression Model 12

2.2 Least-Squares Estimation of the Parameters 13

2.2.1 Estimation of β₀ and β₁ 13

2.2.2 Properties of the Least-Squares Estimators and the Fitted Regression Model 18

2.2.3 Estimation of σ² 20

2.2.4 Alternate Form of the Model 22

2.3 Hypothesis Testing on the Slope and Intercept 22

2.3.1 Use of t Tests 22

2.3.2 Testing Significance of Regression 24

2.3.3 Analysis of Variance 25

2.4 Interval Estimation in Simple Linear Regression 29

2.4.1 Confidence Intervals on β₀, β₁, and σ² 29

2.4.2 Interval Estimation of the Mean Response 30

2.5 Prediction of New Observations 33

2.6 Coefficient of Determination 35

2.7 A Service Industry Application of Regression 37

2.8 Does Pitching Win Baseball Games? 39

2.9 Using SAS® and R for Simple Linear Regression 41

2.10 Some Considerations in the Use of Regression 44

2.11 Regression Through the Origin 46

2.12 Estimation by Maximum Likelihood 52

2.13 Case Where the Regressor x Is Random 53

2.13.1 x and y Jointly Distributed 54

2.13.2 x and y Jointly Normally Distributed: Correlation Model 54

Problems 59

3. Multiple Linear Regression 69

3.1 Multiple Regression Models 69

3.2 Estimation of the Model Parameters 72

3.2.1 Least-Squares Estimation of the Regression Coefficients 72

3.2.2 Geometrical Interpretation of Least Squares 79

3.2.3 Properties of the Least-Squares Estimators 81

3.2.4 Estimation of σ² 82

3.2.5 Inadequacy of Scatter Diagrams in Multiple Regression 84

3.2.6 Maximum-Likelihood Estimation 85

3.3 Hypothesis Testing in Multiple Linear Regression 86

3.3.1 Test for Significance of Regression 86

3.3.2 Tests on Individual Regression Coefficients and Subsets of Coefficients 90

3.3.3 Special Case of Orthogonal Columns in X 95

3.3.4 Testing the General Linear Hypothesis 97

3.4 Confidence Intervals in Multiple Regression 99

3.4.1 Confidence Intervals on the Regression Coefficients 100

3.4.2 CI Estimation of the Mean Response 101

3.4.3 Simultaneous Confidence Intervals on Regression Coefficients 102

3.5 Prediction of New Observations 106

3.6 A Multiple Regression Model for the Patient Satisfaction Data 106

3.7 Does Pitching and Defense Win Baseball Games? 108

3.8 Using SAS and R for Basic Multiple Linear Regression 110

3.9 Hidden Extrapolation in Multiple Regression 111

3.10 Standardized Regression Coefficients 115

3.11 Multicollinearity 121

3.12 Why Do Regression Coefficients Have the Wrong Sign? 123

Problems 125

4. Model Adequacy Checking 134

4.1 Introduction 134

4.2 Residual Analysis 135

4.2.1 Definition of Residuals 135

4.2.2 Methods for Scaling Residuals 135

4.2.3 Residual Plots 141

4.2.4 Partial Regression and Partial Residual Plots 148

4.2.5 Using Minitab®, SAS, and R for Residual Analysis 151

4.2.6 Other Residual Plotting and Analysis Methods 154

4.3 PRESS Statistic 156

4.4 Detection and Treatment of Outliers 157

4.5 Lack of Fit of the Regression Model 161

4.5.1 A Formal Test for Lack of Fit 161

4.5.2 Estimation of Pure Error from Near Neighbors 165

Problems 170

5. Transformations and Weighting To Correct Model Inadequacies 177

5.1 Introduction 177

5.2 Variance-Stabilizing Transformations 178

5.3 Transformations to Linearize the Model 182

5.4 Analytical Methods for Selecting a Transformation 188

5.4.1 Transformations on y: The Box–Cox Method 188

5.4.2 Transformations on the Regressor Variables 190

5.5 Generalized and Weighted Least Squares 194

5.5.1 Generalized Least Squares 194

5.5.2 Weighted Least Squares 196

5.5.3 Some Practical Issues 197

5.6 Regression Models with Random Effects 200

5.6.1 Subsampling 200

5.6.2 The General Situation for a Regression Model with a Single Random Effect 204

5.6.3 The Importance of the Mixed Model in Regression 208

Problems 208

6. Diagnostics for Leverage and Influence 217

6.1 Importance of Detecting Influential Observations 217

6.2 Leverage 218

6.3 Measures of Influence: Cook’s D 221

6.4 Measures of Influence: DFFITS and DFBETAS 223

6.5 A Measure of Model Performance 225

6.6 Detecting Groups of Influential Observations 226

6.7 Treatment of Influential Observations 226

Problems 227

7. Polynomial Regression Models 230

7.1 Introduction 230

7.2 Polynomial Models in One Variable 230

7.2.1 Basic Principles 230

7.2.2 Piecewise Polynomial Fitting (Splines) 236

7.2.3 Polynomial and Trigonometric Terms 242

7.3 Nonparametric Regression 243

7.3.1 Kernel Regression 244

7.3.2 Locally Weighted Regression (Loess) 244

7.3.3 Final Cautions 249

7.4 Polynomial Models in Two or More Variables 249

7.5 Orthogonal Polynomials 255

Problems 261

8. Indicator Variables 268

8.1 General Concept of Indicator Variables 268

8.2 Comments on the Use of Indicator Variables 281

8.2.1 Indicator Variables versus Regression on Allocated Codes 281

8.2.2 Indicator Variables as a Substitute for a Quantitative Regressor 282

8.3 Regression Approach to Analysis of Variance 283

Problems 288

9. Multicollinearity 293

9.1 Introduction 293

9.2 Sources of Multicollinearity 294

9.3 Effects of Multicollinearity 296

9.4 Multicollinearity Diagnostics 300

9.4.1 Examination of the Correlation Matrix 300

9.4.2 Variance Inflation Factors 304

9.4.3 Eigensystem Analysis of X′X 305

9.4.4 Other Diagnostics 310

9.4.5 SAS and R Code for Generating Multicollinearity Diagnostics 311

9.5 Methods for Dealing with Multicollinearity 311

9.5.1 Collecting Additional Data 311

9.5.2 Model Respecification 312

9.5.3 Ridge Regression 312

9.5.4 Principal-Component Regression 329

9.5.5 Comparison and Evaluation of Biased Estimators 334

9.6 Using SAS to Perform Ridge and Principal-Component Regression 336

Problems 338

10. Variable Selection and Model Building 342

10.1 Introduction 342

10.1.1 Model-Building Problem 342

10.1.2 Consequences of Model Misspecification 344

10.1.3 Criteria for Evaluating Subset Regression Models 347

10.2 Computational Techniques for Variable Selection 353

10.2.1 All Possible Regressions 353

10.2.2 Stepwise Regression Methods 359

10.3 Strategy for Variable Selection and Model Building 367

10.4 Case Study: Gorman and Toman Asphalt Data Using SAS 370

Problems 383

11. Validation of Regression Models 388

11.1 Introduction 388

11.2 Validation Techniques 389

11.2.1 Analysis of Model Coefficients and Predicted Values 389

11.2.2 Collecting Fresh Data—Confirmation Runs 391

11.2.3 Data Splitting 393

11.3 Data from Planned Experiments 401

Problems 402

12. Introduction to Nonlinear Regression 405

12.1 Linear and Nonlinear Regression Models 405

12.1.1 Linear Regression Models 405

12.1.2 Nonlinear Regression Models 406

12.2 Origins of Nonlinear Models 407

12.3 Nonlinear Least Squares 411

12.4 Transformation to a Linear Model 413

12.5 Parameter Estimation in a Nonlinear System 416

12.5.1 Linearization 416

12.5.2 Other Parameter Estimation Methods 423

12.5.3 Starting Values 424

12.6 Statistical Inference in Nonlinear Regression 425

12.7 Examples of Nonlinear Regression Models 427

12.8 Using SAS and R 428

Problems 432

13. Generalized Linear Models 440

13.1 Introduction 440

13.2 Logistic Regression Models 441

13.2.1 Models with a Binary Response Variable 441

13.2.2 Estimating the Parameters in a Logistic Regression Model 442

13.2.3 Interpretation of the Parameters in a Logistic Regression Model 447

13.2.4 Statistical Inference on Model Parameters 449

13.2.5 Diagnostic Checking in Logistic Regression 459

13.2.6 Other Models for Binary Response Data 461

13.2.7 More Than Two Categorical Outcomes 461

13.3 Poisson Regression 463

13.4 The Generalized Linear Model 469

13.4.1 Link Functions and Linear Predictors 470

13.4.2 Parameter Estimation and Inference in the GLM 471

13.4.3 Prediction and Estimation with the GLM 473

13.4.4 Residual Analysis in the GLM 475

13.4.5 Using R to Perform GLM Analysis 477

13.4.6 Overdispersion 480

Problems 481

14. Regression Analysis of Time Series Data 495

14.1 Introduction to Regression Models for Time Series Data 495

14.2 Detecting Autocorrelation: The Durbin–Watson Test 496

14.3 Estimating the Parameters in Time Series Regression Models 501

Problems 517

15. Other Topics in the Use of Regression Analysis 521

15.1 Robust Regression 521

15.1.1 Need for Robust Regression 521

15.1.2 M-Estimators 524

15.1.3 Properties of Robust Estimators 531

15.2 Effect of Measurement Errors in the Regressors 532

15.2.1 Simple Linear Regression 532

15.2.2 The Berkson Model 534

15.3 Inverse Estimation—The Calibration Problem 534

15.4 Bootstrapping in Regression 538

15.4.1 Bootstrap Sampling in Regression 539

15.4.2 Bootstrap Confidence Intervals 540

15.5 Classification and Regression Trees (CART) 545

15.6 Neural Networks 547

15.7 Designed Experiments for Regression 549

Problems 557

Appendix A. Statistical Tables 561

Appendix B. Data Sets for Exercises 573

Appendix C. Supplemental Technical Material 602

C.1 Background on Basic Test Statistics 602

C.2 Background from the Theory of Linear Models 605

C.3 Important Results on SSR and SSRes 609

C.4 Gauss–Markov Theorem, Var(ε) = σ²I 615

C.5 Computational Aspects of Multiple Regression 617

C.6 Result on the Inverse of a Matrix 618

C.7 Development of the PRESS Statistic 619

C.8 Development of S²(i) 621

C.9 Outlier Test Based on R-Student 622

C.10 Independence of Residuals and Fitted Values 624

C.11 Gauss–Markov Theorem, Var(ε) = V 625

C.12 Bias in MSRes When the Model Is Underspecified 627

C.13 Computation of Influence Diagnostics 628

C.14 Generalized Linear Models 629

Appendix D. Introduction to SAS 641

D.1 Basic Data Entry 642

D.2 Creating Permanent SAS Data Sets 646

D.3 Importing Data from an EXCEL File 647

D.4 Output Command 648

D.5 Log File 648

D.6 Adding Variables to an Existing SAS Data Set 650

Appendix E. Introduction to R to Perform Linear Regression Analysis 651

E.1 Basic Background on R 651

E.2 Basic Data Entry 652

E.3 Brief Comments on Other Functionality in R 654

E.4 R Commander 655

References 656

Index 670

| Publication date | 15 June 2020 |
|---|---|
| Series | Wiley Series in Probability and Statistics |
| Place of publication | New York |
| Language | English |
| Dimensions | 185 x 257 mm |
| Weight | 1247 g |
| Subject area | Mathematics / Computer Science ► Mathematics |
| ISBN-10 | 1-119-57872-8 / 1119578728 |
| ISBN-13 | 978-1-119-57872-7 / 9781119578727 |
| Condition | New |
