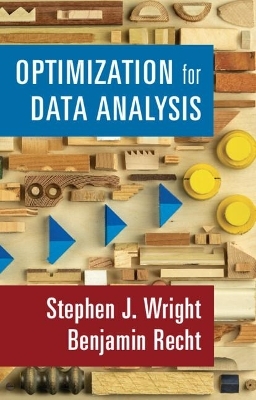

Optimization for Data Analysis

Cambridge University Press (publisher)

978-1-316-51898-4 (ISBN)

Optimization techniques are at the core of data science, including data analysis and machine learning. An understanding of basic optimization techniques and their fundamental properties provides important grounding for students, researchers, and practitioners in these areas. This text covers the fundamentals of optimization algorithms in a compact, self-contained way, focusing on the techniques most relevant to data science. An introductory chapter demonstrates that many standard problems in data science can be formulated as optimization problems. Next, many fundamental methods in optimization are described and analyzed, including: gradient and accelerated gradient methods for unconstrained optimization of smooth (especially convex) functions; the stochastic gradient method, a workhorse algorithm in machine learning; the coordinate descent approach; several key algorithms for constrained optimization problems; algorithms for minimizing nonsmooth functions arising in data science; foundations of the analysis of nonsmooth functions and optimization duality; and the back-propagation approach, relevant to neural networks.

Stephen J. Wright holds the George B. Dantzig Professorship, the Sheldon Lubar Chair, and the Amar and Balinder Sohi Professorship of Computer Sciences at the University of Wisconsin-Madison, and is a Discovery Fellow in the Wisconsin Institute for Discovery. He works in computational optimization and its applications to data science and many other areas of science and engineering. He is a Fellow of SIAM and a recipient of the 2014 W. R. G. Baker Award from IEEE for most outstanding paper, the 2020 Khachiyan Prize of the INFORMS Optimization Society for lifetime achievements in optimization, and the 2020 NeurIPS Test of Time Award. Professor Wright is the author or co-author of widely used textbooks and reference books in optimization, including Primal-Dual Interior-Point Methods (1997) and Numerical Optimization (2006).

Benjamin Recht is an Associate Professor in the Department of Electrical Engineering and Computer Sciences at the University of California, Berkeley. His research group studies how to make machine learning systems more robust to interactions with a dynamic and uncertain world by using mathematical tools from optimization, statistics, and dynamical systems. Professor Recht is the recipient of a Presidential Early Career Award for Scientists and Engineers, an Alfred P. Sloan Research Fellowship, the 2012 SIAM/MOS Lagrange Prize in Continuous Optimization, the 2014 Jamon Prize, the 2015 William O. Baker Award for Initiatives in Research, and the 2017 and 2020 NeurIPS Test of Time Awards.

1. Introduction; 2. Foundations of smooth optimization; 3. Descent methods; 4. Gradient methods using momentum; 5. Stochastic gradient; 6. Coordinate descent; 7. First-order methods for constrained optimization; 8. Nonsmooth functions and subgradients; 9. Nonsmooth optimization methods; 10. Duality and algorithms; 11. Differentiation and adjoints.

| Publication date | 1 November 2021 |
|---|---|
| Additional info | Worked examples or Exercises |
| Place of publication | Cambridge |
| Language | English |
| Dimensions | 156 x 235 mm |
| Weight | 450 g |
| Subject area | Mathematics / Computer Science ► Computer Science ► Databases |
| | Mathematics / Computer Science ► Mathematics ► Applied Mathematics |
| | Mathematics / Computer Science ► Mathematics ► Financial / Business Mathematics |
| ISBN-10 | 1-316-51898-1 / 1316518981 |
| ISBN-13 | 978-1-316-51898-4 / 9781316518984 |
| Condition | New |
