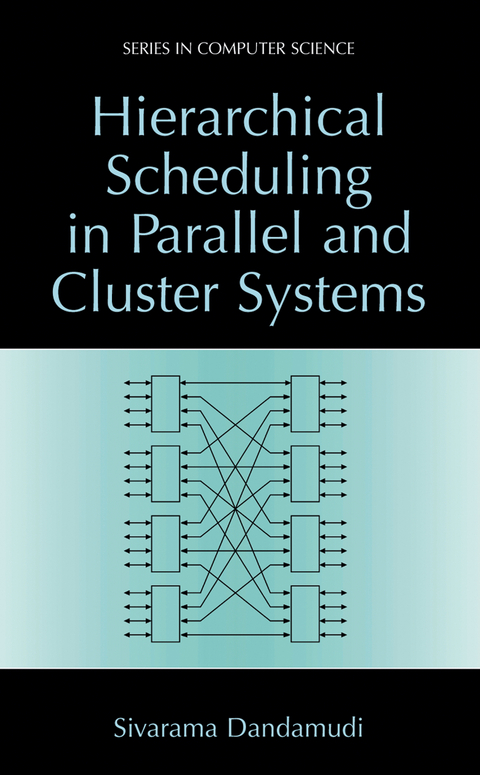

Hierarchical Scheduling in Parallel and Cluster Systems


2012


Softcover reprint of the original 1st ed. 2003

Springer-Verlag New York Inc.

978-1-4613-4938-9 (ISBN)



Multiple-processor systems are an important class of parallel systems. Over the years, several architectures have been proposed for building such systems to meet the requirements of high-performance computing. These architectures span a wide variety of system types.

At the low end of the spectrum, we can build a small shared-memory parallel system with tens of processors. Such systems typically use a bus to interconnect the processors and memory, and they are, for example, becoming commonplace in high-performance graphics workstations. They are called uniform memory access (UMA) multiprocessors because every processor has uniform access to all of memory. They also provide a single address space, which programmers prefer. Because of bus bandwidth limitations, however, this architecture cannot be extended even to medium-sized systems with hundreds of processors.

To scale systems to the medium range, that is, to hundreds of processors, non-bus interconnection networks have been proposed; such systems may, for example, use a multistage dynamic interconnection network. Like UMA systems, they provide a global shared memory. However, they introduce local and remote memories, which leads to a non-uniform memory access (NUMA) architecture.

Distributed-memory architectures are used for systems with thousands of processors. They differ from shared-memory architectures in that there is no globally accessible shared memory. Instead, the processors communicate by message passing, and as a result these systems do not provide a single address space.
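The contrast between a single shared address space and message passing can be illustrated with a small sketch. This Python example is a hypothetical illustration, not taken from the book: it models both programming styles with threads inside one process, so it shows only the style of communication, not the hardware differences (bus, interconnection network, local versus remote memory). The function names `shared_memory_sum` and `message_passing_sum` are illustrative assumptions. In the first, workers add their partial sums to one shared variable guarded by a lock; in the second, workers share no variables and send their partial sums as messages through a queue that stands in for the interconnect.

```python
import threading
import queue


def _split(data, num_workers):
    """Divide data into at most num_workers contiguous chunks."""
    chunk = max(1, (len(data) + num_workers - 1) // num_workers)
    return [data[i:i + chunk] for i in range(0, len(data), chunk)]


def shared_memory_sum(data, num_workers=4):
    """Shared-memory style (hypothetical model): workers update one shared total."""
    total = [0]                  # shared state visible to every worker
    lock = threading.Lock()      # serializes updates to the shared total

    def worker(part):
        partial = sum(part)
        with lock:               # without the lock, concurrent updates could be lost
            total[0] += partial

    threads = [threading.Thread(target=worker, args=(p,)) for p in _split(data, num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total[0]


def message_passing_sum(data, num_workers=4):
    """Message-passing style (hypothetical model): workers share nothing and send results."""
    mailbox = queue.Queue()      # stands in for the interconnection network
    parts = _split(data, num_workers)

    def worker(part):
        mailbox.put(sum(part))   # send the partial result as a message

    threads = [threading.Thread(target=worker, args=(p,)) for p in parts]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # The coordinator receives exactly one message per worker.
    return sum(mailbox.get() for _ in parts)


if __name__ == "__main__":
    values = list(range(1, 101))
    print(shared_memory_sum(values))    # 5050
    print(message_passing_sum(values))  # 5050
```

Both functions compute the same result; the difference lies in how workers exchange data. The shared-memory version relies on every worker seeing the same variable, which is what a single address space provides, while the message-passing version would still work if each worker had its own private memory.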


- I: Background
  1. Introduction
  2. Parallel and Cluster Systems
  3. Parallel Job Scheduling
- II: Hierarchical Task Queue Organization
  4. Hierarchical Task Queue Organization
  5. Performance of Scheduling Policies
  6. Performance with Synchronization Workloads
- III: Hierarchical Scheduling Policies
  7. Scheduling in Shared-Memory Multiprocessors
  8. Scheduling in Distributed-Memory Multicomputers
  9. Scheduling in Cluster Systems
- IV: Epilog
  10. Conclusions
- References

| Series | Series in Computer Science |
|---|---|
| Additional information | XXV, 251 p. |
| Place of publication | New York, NY |
| Language | English |
| Dimensions | 155 x 235 mm |
| Subject area | Mathematics / Computer Science ► Computer Science ► Operating Systems / Servers |
| | Mathematics / Computer Science ► Computer Science ► Networks |
| | Mathematics / Computer Science ► Computer Science ► Theory / Study |
| | Computer Science ► Other Topics ► Hardware |
| ISBN-10 | 1-4613-4938-9 / 1461349389 |
| ISBN-13 | 978-1-4613-4938-9 / 9781461349389 |
| Condition | New |
